March 12, 2019 marks the 30th anniversary of the World Wide Web. From the first web page to the way humans connect, communicate, spend, and live their everyday lives, we take a look at the major events that have shaped our lives over the past 30 years…


A Timeline Infographic: Looking Back at the First 30 Years of the World Wide Web

Please include attribution to appinstitute.com with this graphic.

The Proposal

Nowadays it is common for people to use the terms web and internet interchangeably, even though they refer to two distinct technologies. The internet is the network that the World Wide Web (or web, for short) operates on, and it has existed in one form or another since the late sixties. The web, on the other hand, has only been online for 28 years, though the idea for it is 30 years old. It was in March 1989, while working at CERN, that Tim Berners-Lee wrote Information Management: A Proposal, which included “[…] a solution based on a distributed hypertext system”. As Berners-Lee describes it:

Most of the technology involved in the web, like the hypertext, like the internet, multifont text objects, had all been designed already. I just had to put them together. It was a step of generalising, going to a higher level of abstraction, thinking about all the documentation systems out there as being possibly part of a larger imaginary documentation system.

His manager at the time called it “vague, but exciting” and encouraged him to explore the ideas further. Over the next 18 months, Berners-Lee created many of the tools we still use on the web, including HTTP, HTML, a browser and WYSIWYG editor for web pages, and later the URL structure we’re all familiar with. The first web server went online at CERN in December 1990, but it wasn’t until the following August that Berners-Lee posted details of the web server and the WWW Browser-Editor to newsgroups and published the first publicly accessible web page.

One could reasonably argue that, since Berners-Lee used tools that had already been created by other people, someone else would have put them together to create the web if he hadn’t. But we aren’t here to speculate about what the World Wide Web might have been under someone else’s guidance; rather, we are here to celebrate Tim Berners-Lee’s version of it: how it has changed the world, the way we look for and share information, and how we communicate with friends, family, and perfect strangers. One of the first monumental decisions that got us to where we are today was releasing the web technology and program code into the public domain in April 1993, which made it easier for people to copy, distribute, and modify it. It also helped people understand how the underlying technology worked, so they could go about creating other tools that worked with it.

Just Browsing

Whether you’re surfing the web or just browsing it, having software that makes the whole process a little easier is important, and the first browser to make the web more accessible to average users was announced and released in 1993.

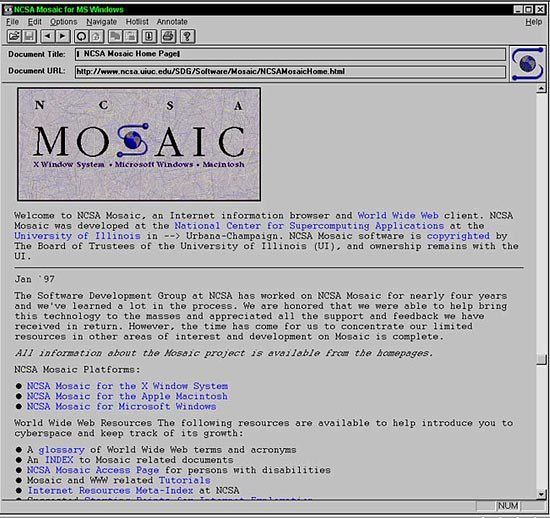

NCSA Mosaic home page displayed in the NCSA Mosaic browser

It was called Mosaic, and although it was originally designed for Unix operating systems, it was also ported to Microsoft Windows and Classic Mac OS. Its graphical user interface (GUI) made it easier to operate, and it was the first browser to display images inline with text. However, one of its original developers, Marc Andreessen, soon left the project to co-found Mosaic Communications Corporation, which was later renamed Netscape Communications Corporation. Netscape Navigator, developed by a team that included several of Mosaic’s creators, rapidly overtook Mosaic, was for a few years the most popular browser among internet users, and introduced many features still found in browsers today. The team behind Netscape is also credited with giving us JavaScript and SSL, but after losing the first browser war to Microsoft’s Internet Explorer, the Netscape browser was eventually discontinued and is unknown to a younger generation. The browser war that began with Netscape and Internet Explorer (IE) continued well into the new millennium, first with Firefox (an offshoot of Netscape) pitted against IE, and then with Google’s own Chrome browser, which was first released in 2008 and has been the dominant browser since mid-2012.

The browser wars affected developers far more than they affected internet users. Although Tim Berners-Lee had founded the World Wide Web Consortium (W3C) in 1994, Netscape and Internet Explorer differed tremendously in how they implemented and supported the standards the W3C put forward. You will still occasionally find differences in how browsers render a webpage today, but the problem was far more widespread and pronounced in the early years of the web, and it was very common for websites to state that they were best viewed in Internet Explorer. Earlier versions of IE had a terrible track record for standards compliance, but since it was the dominant browser at the time, developers had little choice but to make sure their websites looked good in IE, either accepting that they wouldn’t look as good in Netscape (and later Firefox), or using various hacks to make them work reasonably well in all popular browsers. The W3C still exists, and as the technology used on the web has evolved over the years, it has become responsible for maintaining many more standards.

What Are You Looking For?

Search engines were another important development that turned the web into a popular tool rather than a niche one. Part of what drove Berners-Lee’s initial proposal was making information more accessible, and that isn’t possible if you have no easy way of finding the information you are looking for. The first search engine, Archie, predated the first public web server and web page by almost a year, but it was designed for rudimentary indexing of FTP archives. What would eventually become Yahoo! was started in early 1994, but it was little more than a manually curated directory of interesting websites, which makes WebCrawler the first full-text web search engine, and one that would go on to influence other search engines. It started out with around 4,000 websites in its database, but had already served its millionth query within seven months of its launch.

WebCrawler home page in 1995

WebCrawler was also one of the first search engines to let users search for any word or term on a web page, a capability that has become the foundation of every popular search engine since. More than 80 web search engines have been launched since 1993, and though Google now enjoys the lion’s share of the search market, Yahoo! was the dominant search brand in the early years of the web, with a few other brands also enjoying brief periods of popularity. The way websites are crawled, indexed, and searched has also matured considerably, largely thanks to Google’s approach to indexing and ranking sites, which emphasised relevance rather than the mere presence of certain keywords.

“Looking to the future, I expect Google to continue to monetize more real estate in search results. For organic search engine optimization, I suggest offering real value to your visitors.” – Bradley Shaw

Search has also become more intelligent, handling queries phrased more like natural speech than a combination of a few keywords. Google was also very successful at monetising search, launching AdWords in 2000, the same year the dot-com bubble burst. AdWords wasn’t the first service to let brands appear at the top of specific search results, but, once again, Google’s priority was the relevance of the advertiser to the search term. Though Google has diversified into a number of different fields, including browsers and operating systems, advertising is still its biggest source of revenue.

Getting Social

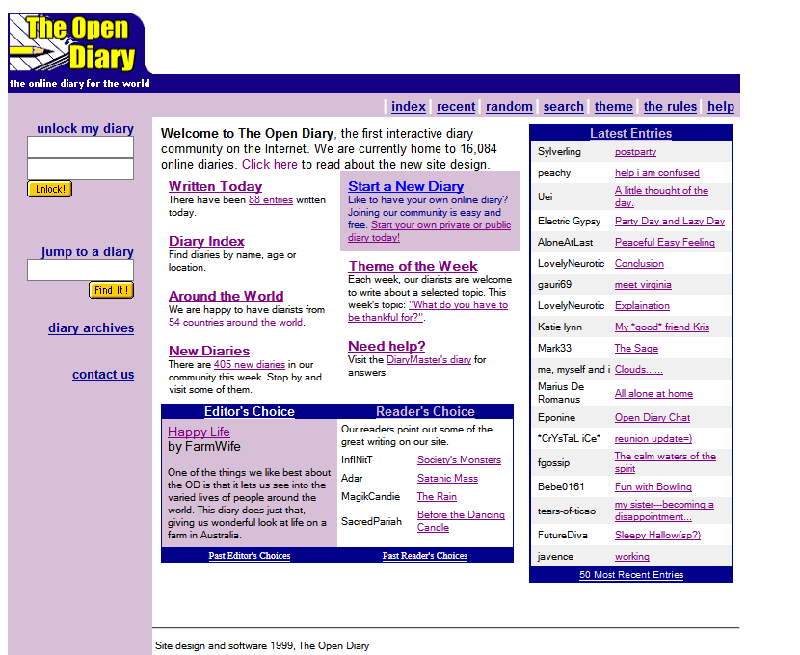

The late nineties saw the launch of a number of platforms that would popularise blogging, or online diaries, as they were first styled. The first popular platform was Open Diary in 1998, followed by LiveJournal and Blogger in 1999. WordPress, now the most popular content management system with blogging features, didn’t launch until 2003.

The Open Diary’s home page in 1999 – Source

These diary platforms revealed how eager people were to share details of their lives, thoughts, activities, and more with perfect strangers, who would sometimes end up becoming friends. Open Diary added the ability for visitors to comment on any post, making diaries and blogs more social, and the launch of Friendster in 2002 marked the beginning of a much more social web. Though it no longer exists, Friendster was the first of a new generation of social networking services, later followed by MySpace, Hi5, and Facebook, and the first such service to reach more than a million users. With more than two billion active users, Facebook is still the leading social networking platform, even though for the first two years of its existence membership was limited to certain US universities, colleges, and schools.

“I think privacy, obviously, is going to become one of the key issues. The big social networks will either fracture from government regulation or users getting tired of being mobbed upon by strangers.” – Dan Martino

There are now more than twenty social networking services with over a hundred million active users each, although they differ considerably in how they operate and the audiences they appeal to; YouTube, Reddit, LinkedIn, and WhatsApp are all considered social networking services.

In August 2007, product designer and technologist Chris Messina changed the way we use the pound/hash sign when he invented the hashtag, transforming the way conversations could be grouped, curated, and searched.

“Prior to the hashtag, people used online groups and forums to have topical conversations, or would use freeform tags to label their content to make it easier for others to find media like photos. We also hung out in real-time chat rooms using a technology called IRC. Each of these behaviors inspired the thinking and design of the hashtag.” – Chris Messina

Most social networks have faced some form of criticism, ranging from privacy concerns to how they handle online bullying and the spread of hateful content. Yet most have continued to grow in popularity, proving quite resilient to criticism.

Making the Web Mobile

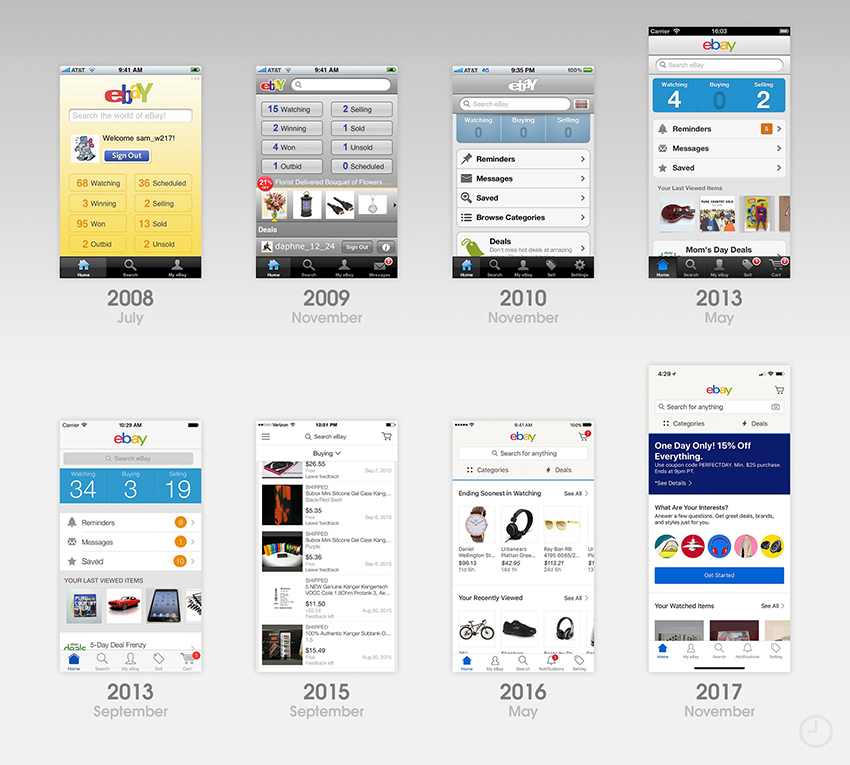

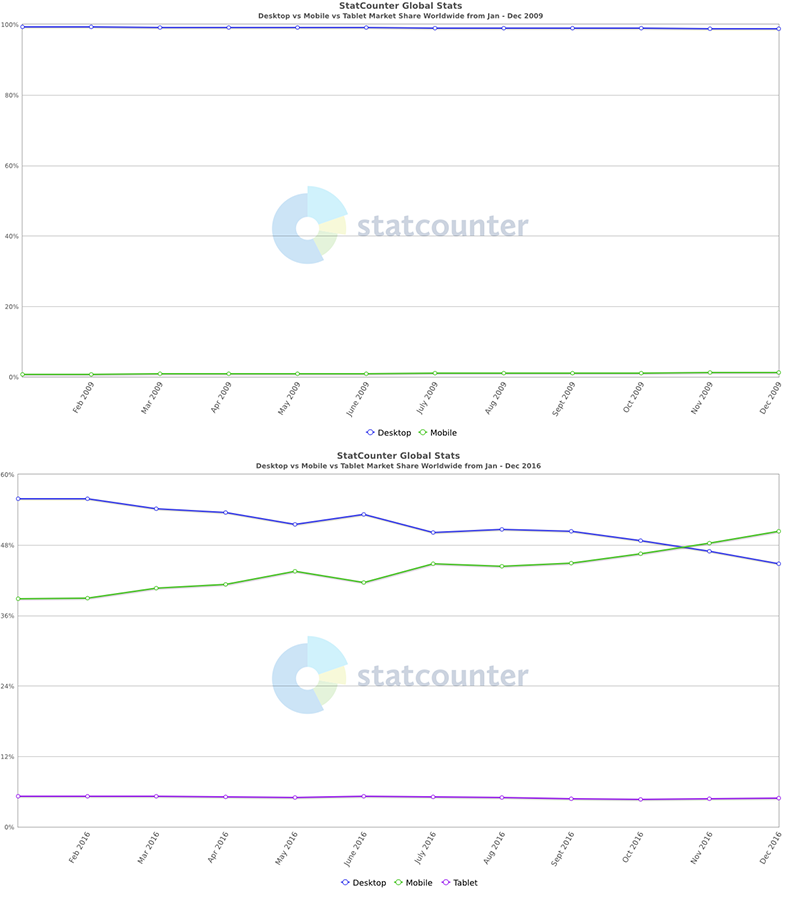

Although mobile phones predate the web, full internet service on a mobile phone only became possible in the late nineties. Even then, the experience was far removed from what we are used to today, and browsing the web on a mobile phone was more of a last resort than a deliberate choice. This all changed with the release of the first iPhone in 2007, followed a year later by the first Android device, both of which changed how we could access the internet on a mobile device. Screens were bigger, and as more websites adopted responsive or adaptive techniques, browsing a website on a mobile device became not too different from doing so on a computer. Both iOS and Android also supported third-party apps, and the app stores for both operating systems launched in 2008, each with fewer than 3,000 apps in the first year, but each now offering more than 2 million apps. As with websites when the web first became public, the first iOS and Android apps were quite rudimentary, in both design and functionality, compared with what we have now.

Evolution of the eBay app for iOS – Source

This is understandable: just as websites have benefited from new technology and the natural progression of HTML, CSS, JavaScript, and other web technologies, so too have mobile apps.

The impact of all this is most notable when comparing how people access the internet, with less than two percent of people using a mobile phone to access the web in 2009, and more than forty percent by early 2016.

Entertain Me

Arguably the biggest change to business models and user behaviour brought about by the World Wide Web has occurred within the entertainment sector, from how we access news, read books, and listen to music, to how we watch TV and movies. But while users embraced this change, and became a major driving force behind it, its pace, and the difficulty of monetising it, have made things hard for content producers. Traditional print media has had a particularly tough time keeping up, watching circulation numbers steadily decline as audiences switched to sourcing news and articles on the web.

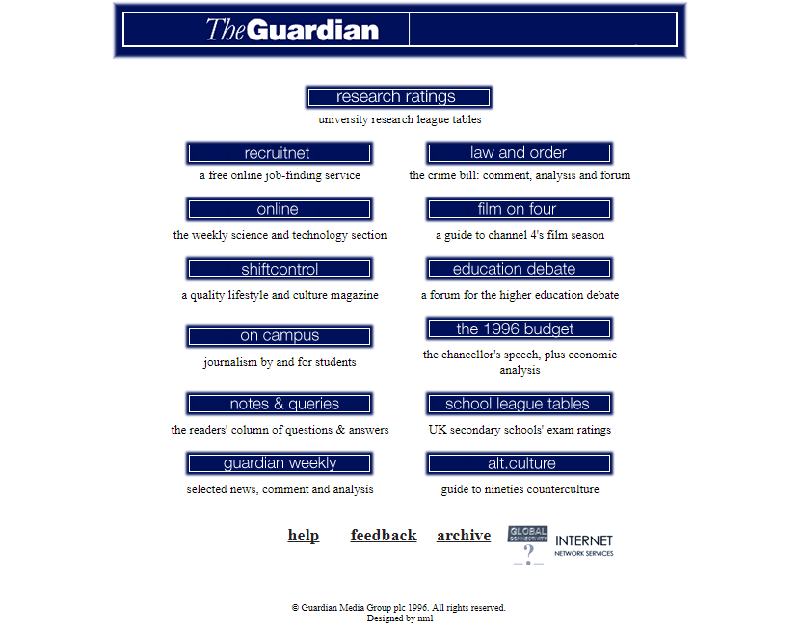

The Guardian’s home page in 1996 – Source

Attempts to monetise the electronic distribution of news through advertising and various subscription models have had limited success, since blocking ads in browsers is easy and there are ways to circumvent paywalls. Then, in 2007, many publishing houses were almost blindsided by the release of the first Amazon Kindle, and there was considerable fretting about a decline in sales of print books. But the death of print books never materialised: while eBooks now account for twenty-five percent of all books sold, year-on-year sales growth has declined in some markets, with younger readers favouring print books over digital ones.

Something else that caught many people off guard was the release of Napster in 1999, which made peer-to-peer sharing of MP3 files incredibly easy, even though sharing copyrighted music that way was illegal. Buying and downloading songs and albums online was already possible, but it was not widely available and was further hindered by a very limited catalogue of digital music. Despite popularising music piracy, Napster also showed record labels that there was a large market for downloadable music, especially where users could buy individual songs instead of a full album. The first popular digital music marketplace, the iTunes Store, launched in 2003 with five major record labels offering music through the service, and by 2016 it was the largest music vendor in the world. It was only natural that other forms of digital media piracy, or file sharing, would follow where Napster led, with second-generation P2P services such as Kazaa and Gnutella allowing users to share movies, TV shows, games, and even software. As with music, the rise in other forms of piracy forced media and entertainment companies to reconsider their business models and begin offering digital downloads through the iTunes Store and other digital marketplaces.

Netflix home page in 2009 – Source

But the push for new ways of accessing and consuming entertainment didn’t end there: music streaming came first, followed by Netflix’s transition from delivering DVDs to streaming TV shows and movies. In 2008, about 0.9 million American households watched television only online; by 2017, 22.2 million households relied entirely on the internet for television viewing.

You’re the Product

Back in 2010, a user on MetaFilter commented, “If you are not paying for it, you’re not the customer; you’re the product being sold”. The idea is paraphrased frequently and forgotten almost immediately, as Google, Facebook, and hundreds of other brands on the web deal with one privacy-related concern, only for another to be exposed weeks or months later. No business can survive by giving away its services or products for free, and while there has long been a reluctance to pay for services offered on the web, a number of SaaS and PaaS products work quite well on a subscription model. But Google, Facebook, Twitter, and a number of other brands in the social networking field have always offered some of their products and services free of charge to end users, without giving up on the idea of monetising them.

This is where users become the product: anonymised pieces of their private information, demographics, and behaviour on the platform are sold to advertisers, so that the advertisers can better target who sees their adverts. On the surface, it isn’t such a bad deal, but unfortunately, when you’re the product you also have little control over what personal data advertisers have access to, and you have to trust that Facebook, Google, and others behave ethically. Every now and then they don’t, as evidenced by the Cambridge Analytica scandal that beset Facebook in early 2018, forcing the company to change certain internal policies and take out full-page advertisements apologising for the misstep. And that wasn’t the only scandal Facebook faced, either before 2018 or since. While some brands are clearly trying to find better ways of managing your privacy without affecting their revenue streams, governments around the world are starting to question whether that is enough, and the GDPR came into effect across the European Union in 2018. Even though the UK was already in the middle of Brexit talks and preparations, its own Data Protection Act is largely modelled on the GDPR, which aims to put strict processes in place for the management, storage, and use of personal data.

“I’m fairly confident data protection issues will become increasingly more prevalent in business practices, politics, and the media. In fact, it’s already happening now. World leaders are already stepping up and making soft agreements in creating more ethical and secure data protection and cybersecurity policies.” – Jamie Cambell

An important point about the GDPR and the DPA is that, while they apply to the EU and the UK, they don’t consider where your company is based, only whether you handle the personal data of people in the EU or UK. So even a small online business operating out of a small warehouse in Boise, Idaho, would need to be GDPR compliant if any of its products are bought by someone in the EU or the UK.

The Future

It’s difficult to imagine what the web will be like in another 30 years when you consider how much has changed since it was first made accessible to the public. In the early years, technology and connection speeds meant that even simple images could take many seconds, if not minutes, to render, and downloading a small video could take hours. Now we readily give up on a website if it hasn’t fully loaded within four seconds, and have no difficulty watching movies streamed in high definition in real time. But it is reasonable to surmise that over the next few years we may see more regulatory requirements imposed on certain web services, particularly those in the social networking sector, such as Facebook, YouTube, and Twitter. All of these have faced considerable criticism almost since they first launched, sometimes over privacy concerns and at other times over how easily they allow hoaxes and false information to spread. In the first three months of 2019 alone, YouTube announced that it would recommend fewer videos that could misinform users in harmful ways, and then decided to remove ads from videos with anti-vaccination content. Any future regulation, whether self-imposed or mandated, will certainly affect how the web continues to evolve, and just like the first thirty years, the next thirty will surely be exciting.

Last Updated on September 2, 2024 by Ian Naylor
